
Architectural Diffusion

↝ Year

2022

↝ Description

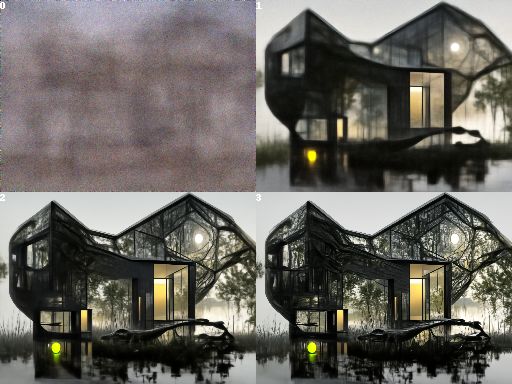

A few years before AI image generation became mainstream, I fine-tuned the OpenAI 512x512 diffusion model on 30,000 images from the AIDA architecture database in the Harvard Dataverse, with mixed success. To get higher-resolution results, I used Topaz Gigapixel AI to upscale all 30k training images to 512x512. I let the training run for 1.5 million iterations, after which the model seemed to overfit: upscaling artifacts became more visible while image quality did not improve.
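As a rough illustration of the arithmetic behind getting every training image to 512x512, here is a minimal sketch. It is not the actual pipeline, which ran through Topaz Gigapixel AI. It assumes each image is center-cropped to a square first, which the write-up does not state. The function names `center_crop_box` and `upscale_factor` are hypothetical helpers made up for this example.

```python
import math

TARGET = 512  # side length expected by the 512x512 diffusion model


def center_crop_box(width, height):
    """Return (left, top, right, bottom) of the largest centered square.

    Assumption: images are cropped to a square before upscaling.
    """
    side = min(width, height)
    left = (width - side) // 2
    top = (height - side) // 2
    return (left, top, left + side, top + side)


def upscale_factor(width, height, target=TARGET):
    """Smallest integer factor that brings the square crop to at least `target`.

    Returns 1 when the shorter side is already large enough,
    meaning the image only needs downscaling, not upscaling.
    """
    side = min(width, height)
    return max(1, math.ceil(target / side))


if __name__ == "__main__":
    # Hypothetical source sizes, for illustration only.
    for size in [(640, 480), (1024, 768), (200, 300)]:
        print(size, center_crop_box(*size), upscale_factor(*size))
```

For a 640x480 source, this gives a 480x480 centered crop and an upscale factor of 2, after which the result would be resized down to exactly 512x512.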

If you are interested in giving my fine-tuned model a whirl, just head to the DiscoStream Colab notebook and select Architecture_Diffusion_1-5m.

↝ Tools used

Python

Topaz Gigapixel

Disco Diffusion


Modern architecture 1, fine-tuned model by me

Modern architecture 1, OpenAI unconditional model

Modern architecture 2, fine-tuned model by me

Modern architecture 2, OpenAI unconditional model

Modern architecture 3, fine-tuned model by me

Modern architecture 3, OpenAI unconditional model

Modern architecture 4, fine-tuned model by me

Modern architecture 4, OpenAI unconditional model

Modern jungle bio-architecture, fine-tuned model by me

Modern jungle bio-architecture, Diffusion steps visualisation
