Stable AI

↝ Year

2022 - 2024

↝ Description

Note: I built these pipelines for directing AI on client projects before image generation became widely adopted and easy to use.

Exploring and experimenting with Stability AI's diffusion models, namely Stable Diffusion. What started as experiments two years ago turned into various client projects in the past few months, be it matching image styles for a client's brand timeline, enhancing 3D renders using the newest techniques, or creating custom models for a project's specific needs.

↝ Tools used

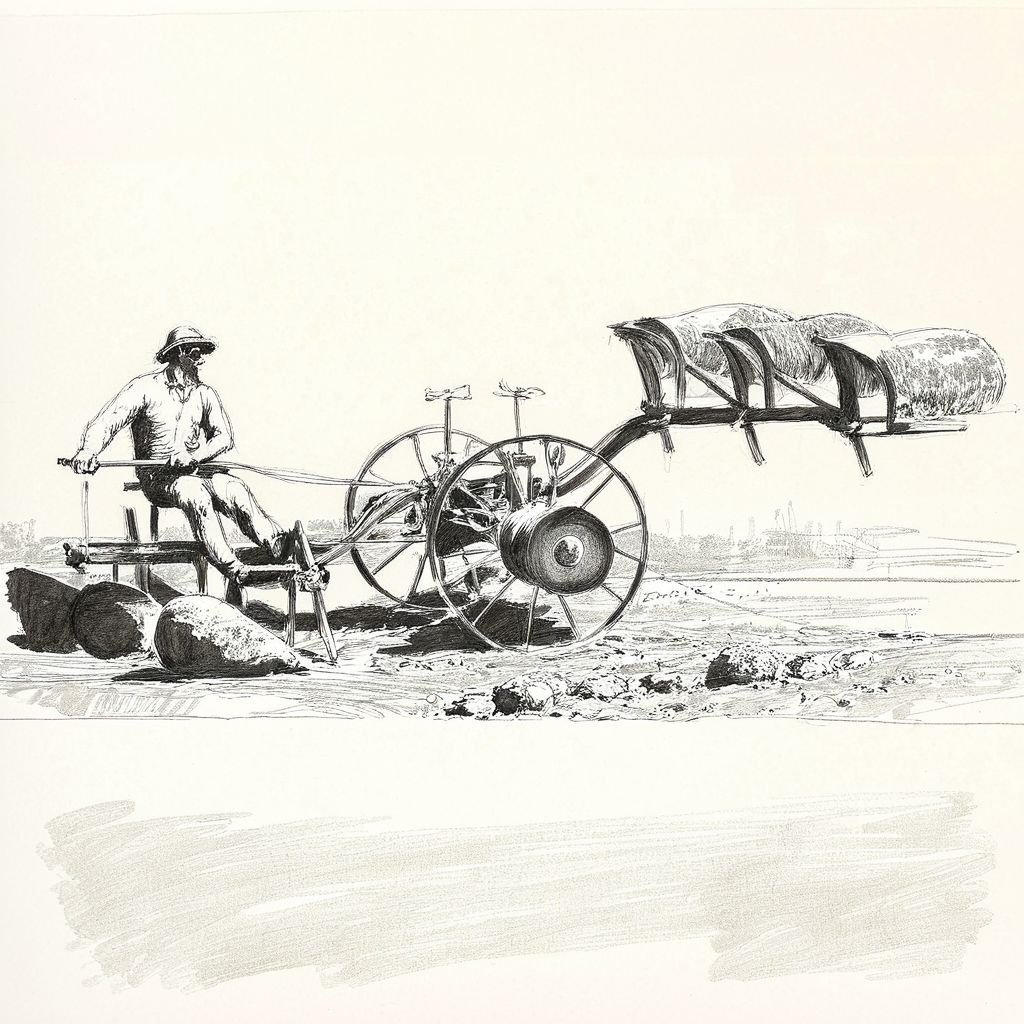

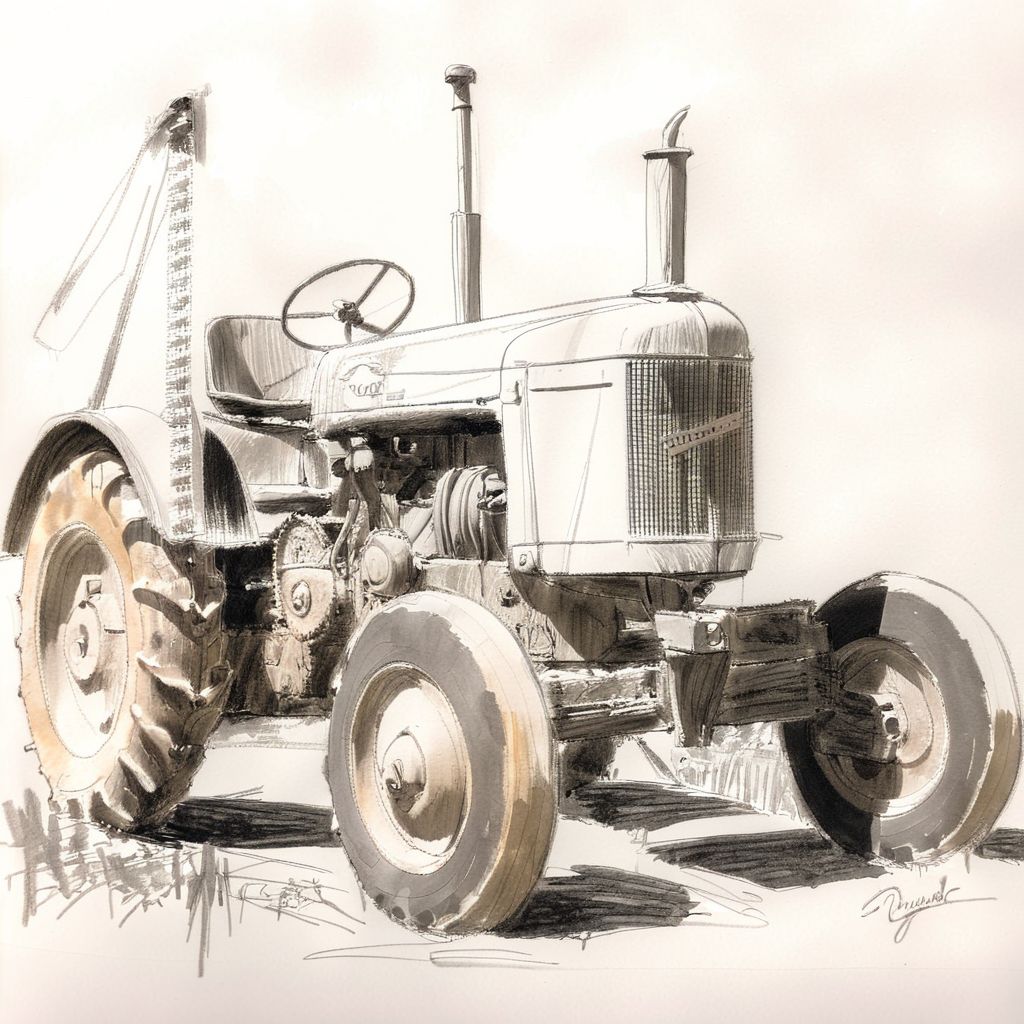

For a client's brand timeline, the style needed to match the given moodboard, and some of the objects were too obscure for the AI to know. Using bleeding-edge techniques, I created a workflow that let me either draw over existing images of the obscure objects in the given style or generate images from scratch.

Back in early 2023, it was still a challenge to get AI to follow the structure of a given image. ControlNet had just been released and nobody really understood it all that well, so when I was asked to generate abstracted artwork reminiscent of the Brandenburg Gate, I took the chance to test the various ControlNet approaches.

Personal Experiments

When a new image generation model was released by a German startup in August 2024, I immediately jumped on the experimental support for it in ComfyUI to see whether it would be useful for current or even future projects. Realizing that the model was a huge step up in prompt comprehension as well as in generating text, I tried a diverse range of possible applications to see which use cases it could help with, and it fared incredibly well.

Even in the Disco Diffusion days (where you needed an A100 GPU and about 30 minutes to generate a single picture, those were the times), people were already trying to generate some form of video with AI. Once Stable Diffusion arrived and made generation a lot more resource-friendly, I tried something similar to how video generation worked back then: a video in which the camera moves slightly while the prompt changes every few seconds, creating the sense of travelling across the world.

Of course, contrary to the name of the model, the result isn't stable, since every single frame is generated as a new image. Keep in mind this was before any of the fancy video capabilities seen in recent years.
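
As a rough illustration of the idea described above (not the original workflow), the per-frame schedule for such a video could be planned like this. Everything here is a hypothetical sketch: the names `FramePlan` and `plan_frames`, the frame rate, the seconds per prompt, and the zoom factor are assumptions made for the example, and the actual image generation step is left out entirely.

```python
from dataclasses import dataclass


@dataclass
class FramePlan:
    index: int    # frame number in the final video
    prompt: str   # prompt used to generate this frame
    zoom: float   # assumed per-frame zoom factor for the slight camera move
    seed: int     # seed for this frame's generation


def plan_frames(prompts, fps=12, seconds_per_prompt=3.0,
                zoom_per_frame=1.02, base_seed=0):
    """Assign a prompt, zoom factor, and seed to every frame.

    The prompt switches every `seconds_per_prompt` seconds; each frame
    gets its own seed because every frame is generated as a new image.
    """
    frames_per_prompt = max(1, round(fps * seconds_per_prompt))
    plans = []
    for i in range(len(prompts) * frames_per_prompt):
        plans.append(FramePlan(
            index=i,
            prompt=prompts[i // frames_per_prompt],
            zoom=zoom_per_frame,
            seed=base_seed + i,
        ))
    return plans
```

Each `FramePlan` would then be handed to whatever generation backend is in use. Because every frame is generated independently, the flicker mentioned above is expected with a plan like this.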